Adobe Digital University Learning Completions

Learning completions were low, and surveys showed that learners found Digital University to be fragmented and difficult to follow.

My team redesigned the platform to increase engagement and promote more learning completions.

Context

Sales employees learn about new products and sales techniques through Adobe Digital University (ADU), but training completion rates have always been low. When we ran a survey to find out why, we discovered that learners found the content hard to consume and hard to track because it was hosted on many different platforms.

My team took on a massive project to consolidate content on the Adobe Experience Manager CMS platform, which would allow us to design and standardize the learning content presentation on ADU.

My Role

I led the design efforts for this project as a UX designer and lead. I created the wireframes and mockups needed to communicate my ideas for the learning journey design to teammates, stakeholders and developers. I conducted several of our discovery interviews and user tests, and collaborated with my teammates to analyze the results.

I also served as the product owner for the engineering team due to my background in front-end web development and agile.

Objectives

- Increase employee engagement with learning content on Adobe's Digital University platform.

- Increase completions of learning content.

Results

The new Digital University learning journeys were well received by learners and led to the highest-ever training completion rates by the field, often hitting 95% and above, a benchmark that had never been achieved before this project.

Building the foundation through research

To determine what design might work best for our standardized learning format, my team conducted semi-structured interviews with twenty users from around the world (an approach that had never been tried before in this group). We wanted to get a sense of how they were currently consuming learning content and how it might be improved. These interviews helped us identify several opportunities for design, including:

Consolidating the content

Because training could be found in many different places and on many different platforms, users expressed confusion about where they might find the content they were looking for. We needed to consolidate it all into a single platform on Digital University.

A single, simple structure

Users also expressed frustration about having to constantly learn a new interface in order to take the training they were assigned. They wanted to have a single format that was easy to use so they didn't have to spend time trying to figure out how the on-demand training worked.

Enhancing the engagement

Many users described the platforms that we were using as unwelcoming and uninspired. We needed to build something that increased their level of engagement by putting the emphasis on making the content accessible and even fun.

Creating the connection

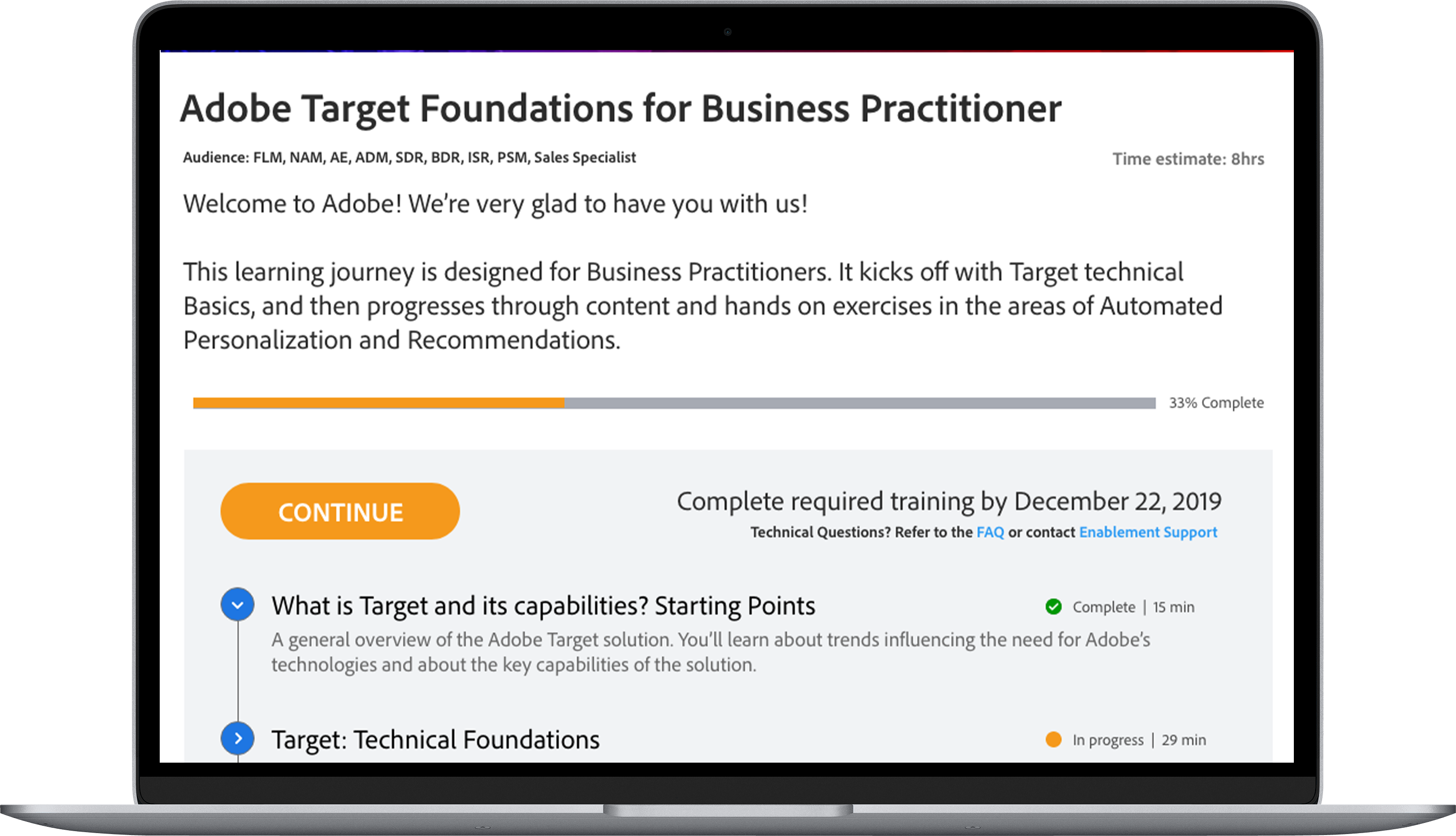

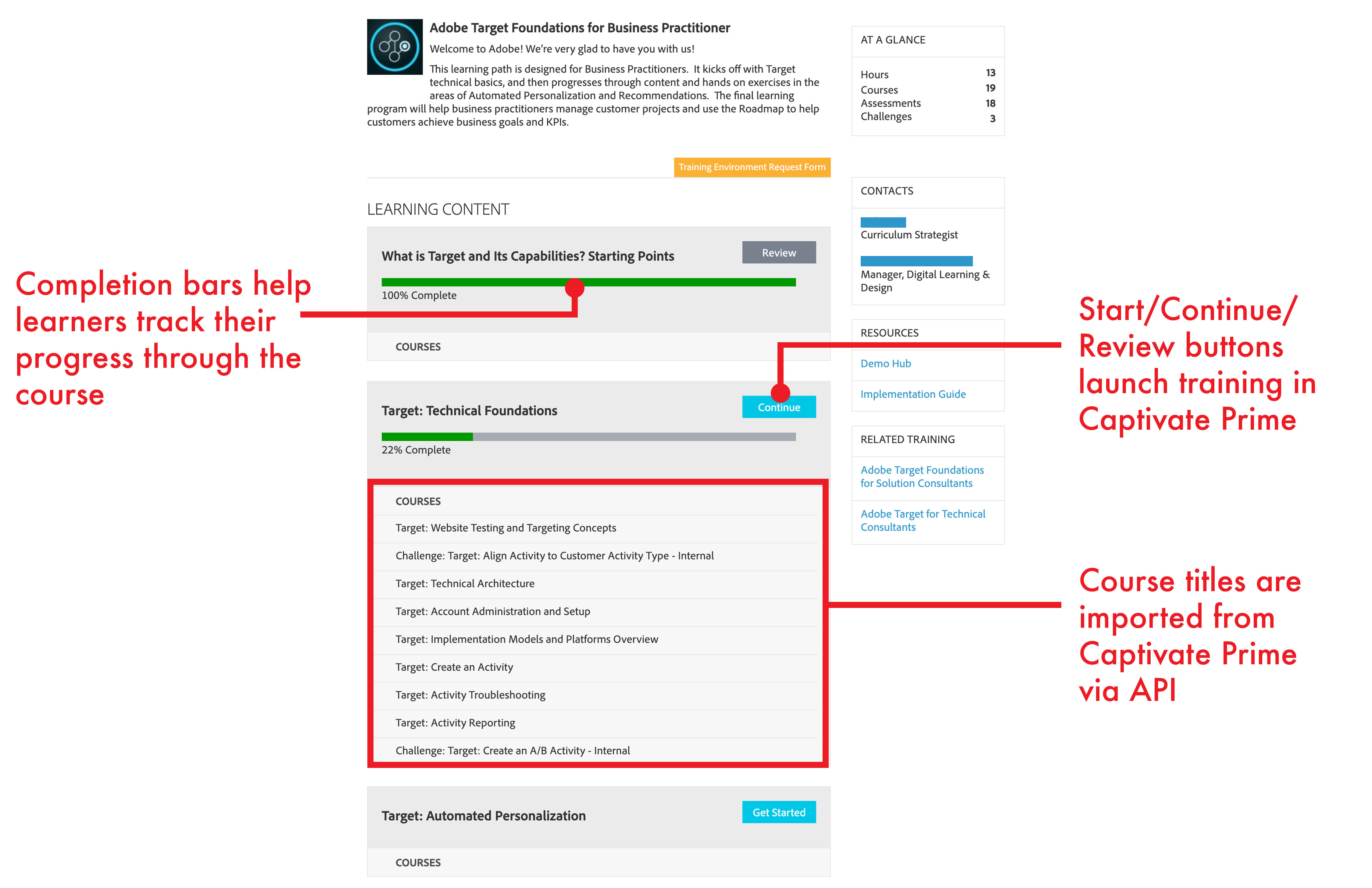

The first step was to create a connection between Adobe Digital University and the various learning platforms. Because much of the training was being migrated to Captivate Prime, we started by building a simple link between ADU and Captivate Prime using its API. We could access course descriptions and monitor progress, but users were still taken into Captivate Prime to take their training. This version served as a proof of concept to show that integration between the two platforms was possible.

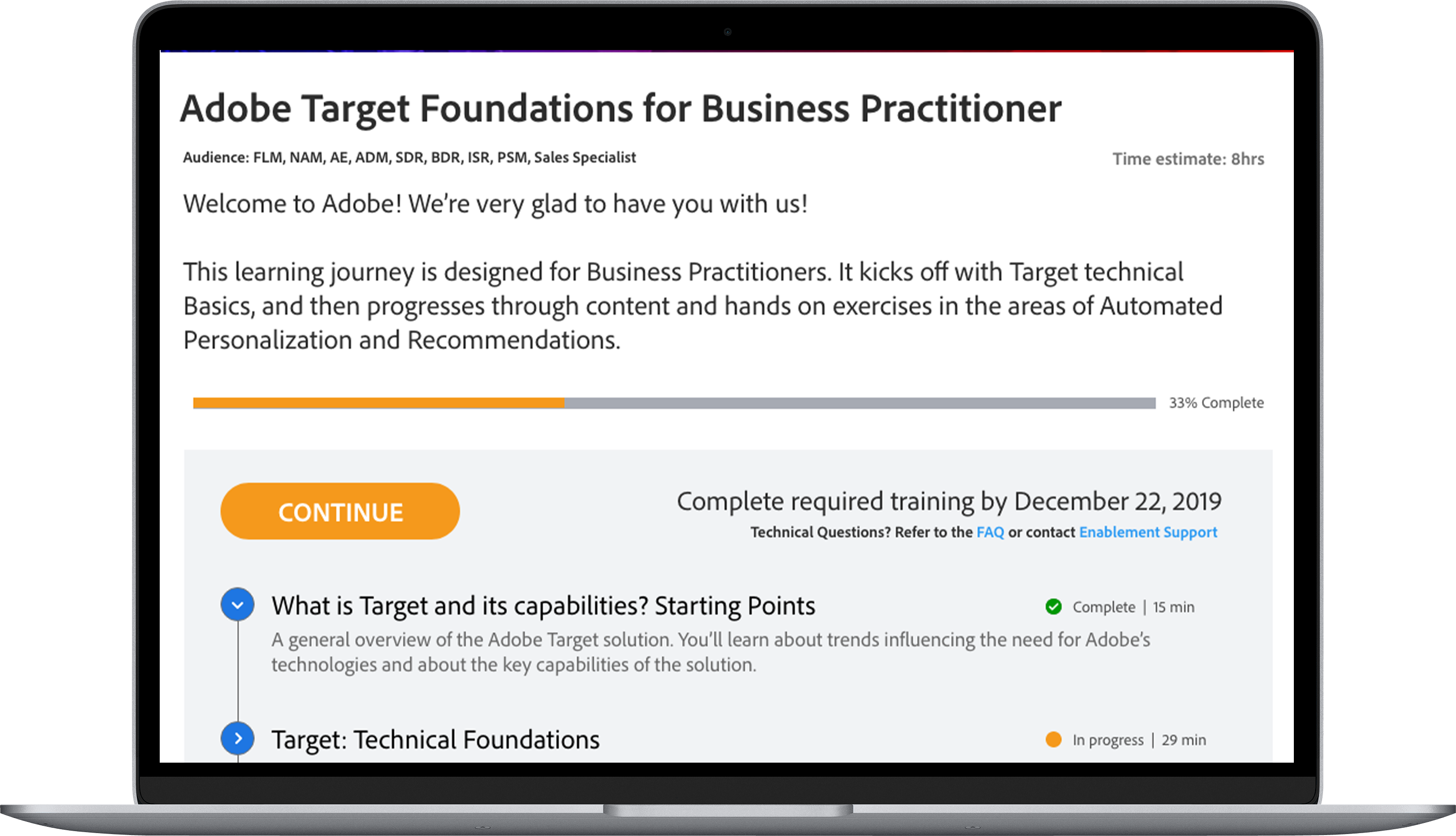

Personalized progress

The first version of the Digital University learning journey was limited in scope since it was the first exploration into how to consolidate content onto ADU. Learners could see the courses they needed to take and track their progress, but they had to take the actual courses on a different platform. Though we had many more features planned, these were selected first because they were the easiest to integrate technically.

An in-page player

After our first learning journey experience was released, we checked in again with learners by running more semi-structured interviews. Learners noted that the experience was still disjointed: they could see some of their progress in ADU, but they had to take courses using a completely different interface. The next step was to bring the actual learning experience into ADU, which was a much bigger and more complex integration in terms of both design and development.

The player window

The player window was finally placed on the page in Digital University. Users could watch videos and take quizzes without ever having to leave ADU.

The table of contents

Alongside the player window, the table of contents helped users to navigate through the training content. Collapsible drill-downs allowed learners to have both a high-level and a detailed view of the content, and they could quickly and easily find the content that they wanted to focus on.

The learning journey progress bar

While we had already implemented a progress bar for individual learning modules, version 2.0 implemented the first "overall" progress bar, one of the most requested features from our user studies.
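As an illustration of how an overall progress value could be derived from per-module progress, here is a minimal sketch. It is not the production ADU code: the `Module` shape, the `overallProgress` name, and the choice to weight every module equally are all assumptions made for the example.

```typescript
// Hypothetical shape for one learning module; field names are assumptions.
interface Module {
  id: string;
  percentComplete: number; // expected range: 0 to 100
}

// Averages per-module progress into one journey-level percentage.
// Assumes every module counts equally toward the journey.
function overallProgress(modules: Module[]): number {
  if (modules.length === 0) {
    return 0; // an empty journey has no progress to report
  }
  const total = modules.reduce((sum, m) => {
    // Clamp each value so bad data cannot push the bar past its bounds.
    const clamped = Math.min(100, Math.max(0, m.percentComplete));
    return sum + clamped;
  }, 0);
  return Math.round(total / modules.length);
}
```

Equal weighting keeps the bar easy to reason about. Weighting by module length would be another reasonable choice if that data were available.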

Expanding the experience

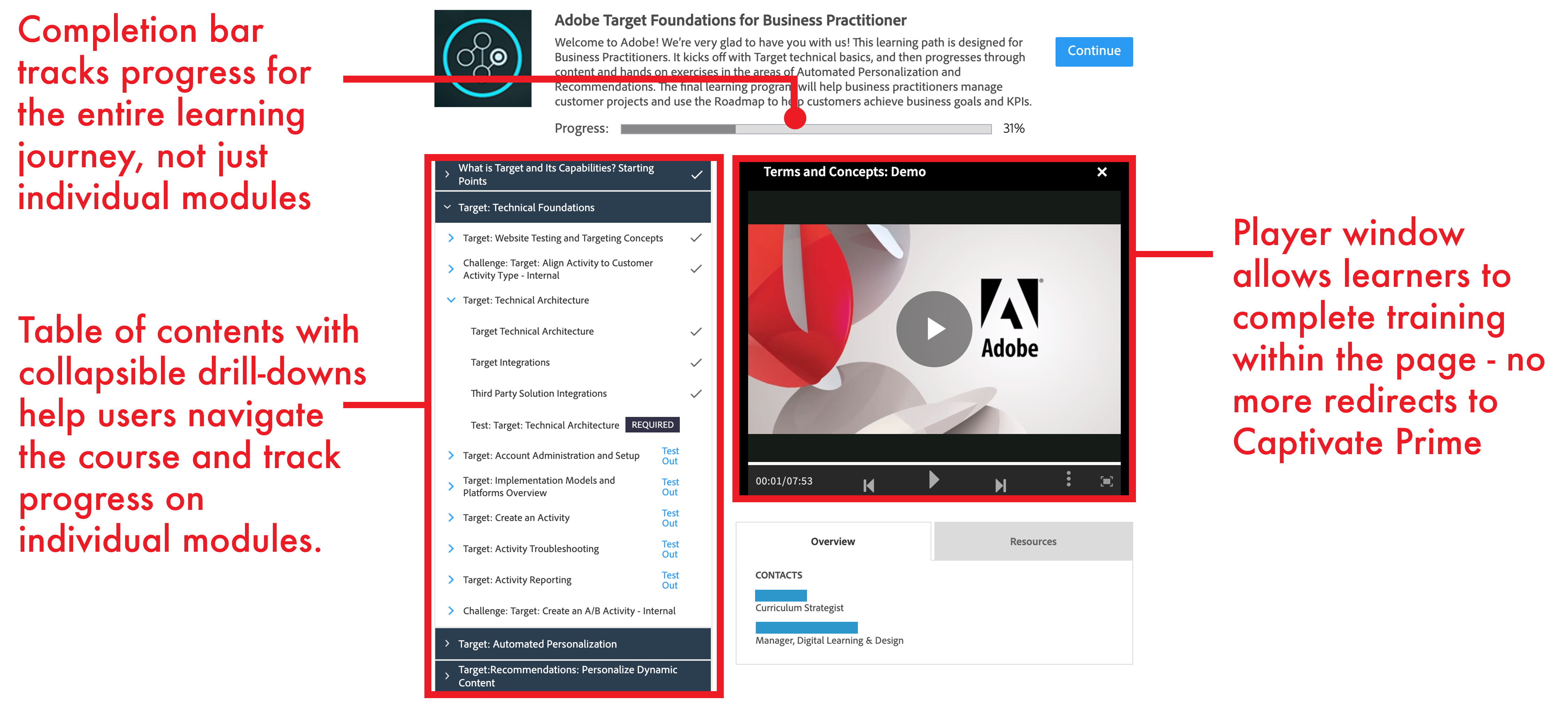

While our second version was generally well received, our user testing revealed more issues that needed to be addressed. One of the biggest was that the player window was too small, and even though there was a "full-screen" button, many learners did not discover it on their own. Additionally, users often found progress on individual items hard to track.

Widening the window

Version 3.0 introduced a much larger, full-width window that made watching videos, reading text and clicking on items in quizzes much easier. The table of contents was moved below the content window to accommodate the full-width player.

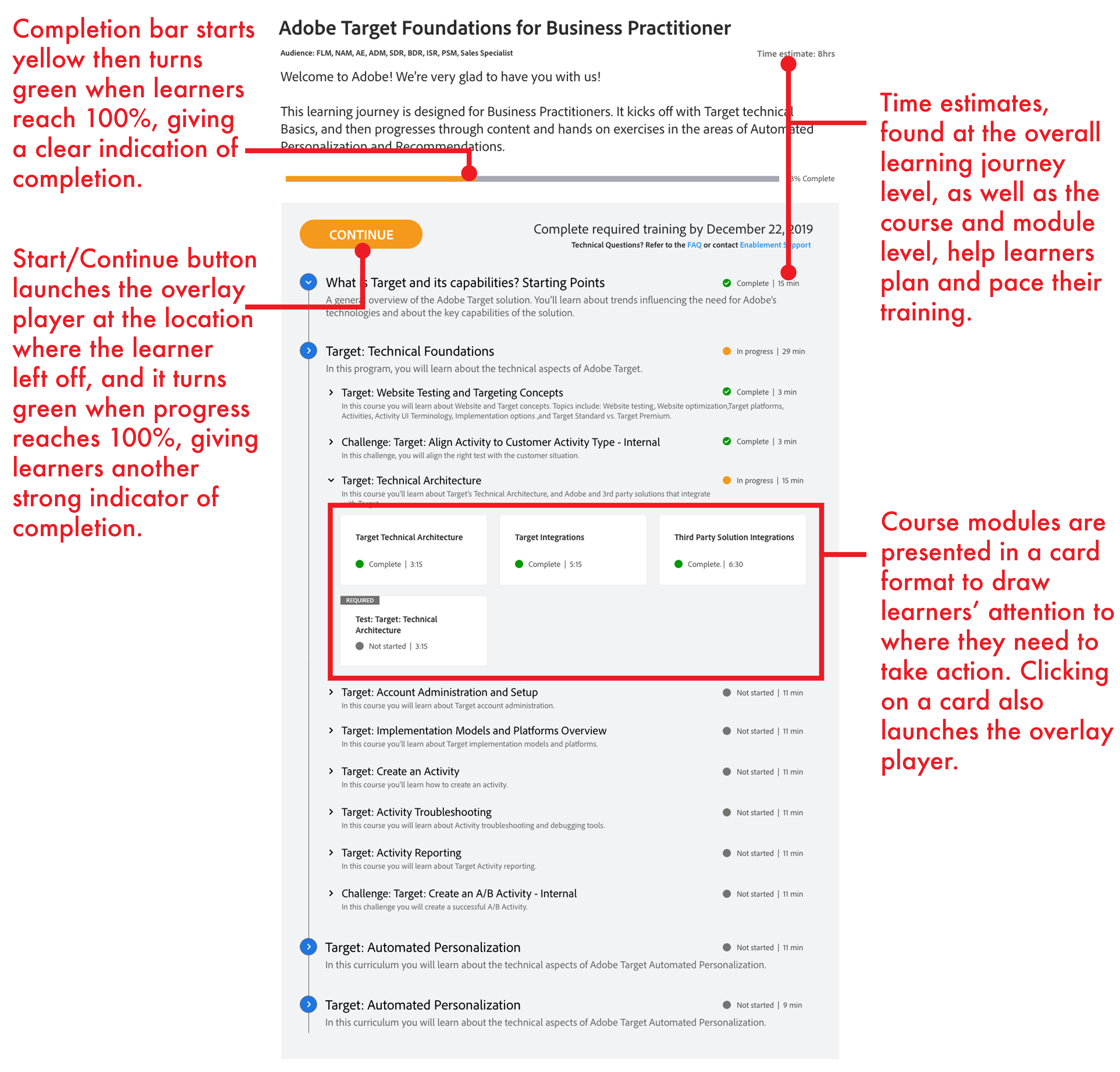

Going green

Individual module completion markers were all enlarged and followed a progression from grey (not started) to orange (in progress) to bright green (complete). Our studies showed that making completion more prominent helped users track their progress better and gave them a sense of accomplishment.
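The three-state progression described above can be sketched as a small mapping. This is an illustrative sketch only: the `ModuleStatus` type, the function names, and the specific color strings are assumptions, not the values used on ADU.

```typescript
// The three marker states named in the text.
type ModuleStatus = "not-started" | "in-progress" | "complete";

// Placeholder color values; the real palette is not specified in this write-up.
const MARKER_COLOR: Record<ModuleStatus, string> = {
  "not-started": "grey",
  "in-progress": "orange",
  "complete": "green",
};

// Derives a status from a 0 to 100 progress value (hypothetical helper).
function statusFor(percentComplete: number): ModuleStatus {
  if (percentComplete >= 100) return "complete";
  if (percentComplete > 0) return "in-progress";
  return "not-started";
}

// Convenience lookup for rendering a marker.
function markerColor(percentComplete: number): string {
  return MARKER_COLOR[statusFor(percentComplete)];
}
```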

Added info

We discovered that learners needed more information to help them decide whether or not to take a learning journey. Things like target audience, deadline, and a help link were all added to the layout.

Emphasizing engagement

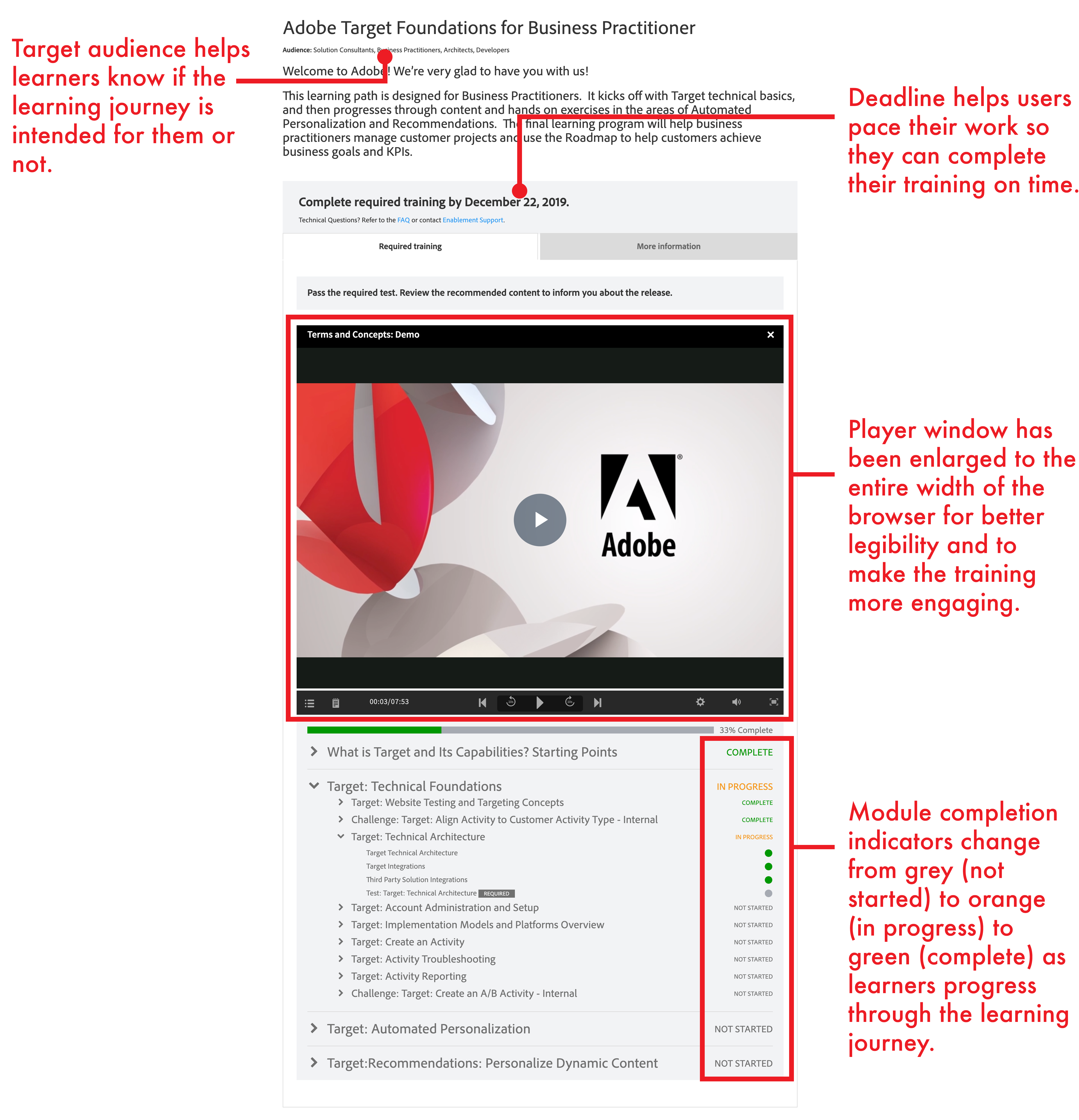

User studies showed that the third version was a vast improvement over the second, but there was still work to do. One of the biggest issues was that the player window had become so large that it pushed the table of contents too far down the page, forcing users to scroll past the player when they wanted to explore the course. Since users indicated that they typically like to explore the course before starting it, we had to rethink the layout.

Surfacing the specifics

We removed the player window from the page altogether and brought the table of contents up to the top. This allowed users to start exploring the course as soon as they landed on the page without having to scroll.

The overlay player

Also at the top of the page is a prominent "start"/"continue" button. When the user is ready to jump into the course content, they can click on this button to launch a full-screen overlay player. The overlay player fills the entire browser screen and offers a much more engaging experience for learners.

Module cards

In an effort to make the table of contents even more engaging, we moved the individual modules from a simple list to a more eye-catching card format, since that is the level that users should be paying most attention to.

Time estimates

Users indicated that it was often frustrating to open a training and discover it was much longer than they had anticipated. To address this concern, we added time estimates for each module to help learners plan their training and know what to expect. This has helped learners start content at a time when they are ready to engage with it.
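To show how per-module estimates might be presented and rolled up, here is a small sketch. The function names, the minutes-based input, and the label format are assumptions for illustration, not a description of the shipped feature.

```typescript
// Formats a duration in whole minutes as a short label (hypothetical format).
function formatEstimate(minutes: number): string {
  const whole = Math.max(0, Math.round(minutes));
  const hours = Math.floor(whole / 60);
  const mins = whole % 60;
  if (hours === 0) return `${mins} min`;
  if (mins === 0) return `${hours} hr`;
  return `${hours} hr ${mins} min`;
}

// Sums the estimates of a set of modules, given in minutes.
function totalEstimate(moduleMinutes: number[]): number {
  return moduleMinutes.reduce((sum, m) => sum + Math.max(0, m), 0);
}
```

For example, `formatEstimate(totalEstimate([20, 40, 15]))` produces `"1 hr 15 min"`.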

The impact of Digital University Learning Journeys

The Digital University learning journeys have been well received by learners and have led to the highest-ever training completion rates by the field, often hitting 95% and above, a benchmark we had never achieved before this project. The latest learning journey design iteration has been applied to all courses on Digital University, and learners continue to report high levels of satisfaction with the training they complete.

Lessons learned

Iteration station

Each user study we ran on each iteration of this tool led to more insights about what learners were looking for and how we could improve the product. This led us in directions that we could not have anticipated at the outset, and helped us build something truly useful and engaging for our users.

Lead by design

As the lead designer on this project, I learned how to communicate and present my ideas more effectively to both my teammates and my stakeholders. Tailoring my messaging about the design to the things I knew they cared about helped me gain the buy-in I needed to move the project forward.

The importance of relationships

As product owner, I knew I needed to get a lot of output from my developers in a fairly short amount of time. I invested a lot of time in building rapport with them and keeping communication channels open, so that they would feel comfortable coming to me with any issues they were facing and we could resolve them quickly.